A fair comparison of modern models' reasoning capabilities

December 22, 2023

The convergence of models' interfaces on the Chat Message API, together with the industry-wide push toward larger context sizes, opens an interesting opportunity: comparing recent models in a perfectly equivalent setup on a reasoning-heavy dataset, MATH.

Models

We present an evaluation of the following models on the MATH dataset:

- Anthropic: claude-instant-1.2, claude-2.1
- Mistral: mistral-medium (unreleased), mistral-small (the 8x7B)
- Google: gemini-pro
- OpenAI: gpt-4-1106-preview, gpt-3.5-turbo-1106

All models are aligned to follow instructions through a chat interface, and all have a context size of 16k tokens or more. The interfaces their APIs present are similar, converging on the Chat Message interface introduced by OpenAI (see code).

We did not evaluate llama-2-chat because of its limited 4k context size, which would have resulted in an unfair comparison (our methodology relies on a context size of at least ~8k tokens).

Dataset

The MATH dataset consists of mathematical questions, each with a unique final answer (usually numerical). For each question, the dataset includes a worked solution as well as the answer. These exercises range from high-school to early-undergraduate Olympiad level, making this dataset a great probe of models' reasoning capabilities.

This dataset also has limitations. It is sourced from actual US math competition problems (AMC, AIME, ...) that are discussed online, which leads to unavoidable contamination, potentially favoring larger models. Despite this intrinsic limitation, it remains one of the best datasets for evaluating models' reasoning capabilities.

Limitations

For our evaluations, we use a random sample of 128 problems from the MATH test split, balanced over categories and difficulty levels. 128 is admittedly a small number of problems for evaluating on MATH, but the goal of this project is first and foremost to compare models rather than to compute a precise MATH score for each of them.
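One way to draw a sample balanced over categories and difficulty levels is a seeded round-robin over (category, level) cells. The sketch below is illustrative only and is not the code from the Dust repository: it assumes each problem is a dict with `type` and `level` fields, as in the public MATH JSON files, and the helpers `balanced_sample` and `cell_counts` are hypothetical. MATH has 7 categories and 5 levels, so there are 35 cells, and 128 = 35 × 3 + 23 means each cell receives 3 or 4 problems.

```python
import random
from collections import Counter, defaultdict


def balanced_sample(problems, n=128, seed=0):
    """Draw n problems, spreading them as evenly as possible over (type, level) cells.

    Assumes each problem is a dict with "type" and "level" keys (hypothetical
    schema mirroring the public MATH JSON files).
    """
    rng = random.Random(seed)
    cells = defaultdict(list)
    for p in problems:
        cells[(p["type"], p["level"])].append(p)
    keys = sorted(cells)  # sort so the cell order does not depend on input order
    for k in keys:
        rng.shuffle(cells[k])
    rng.shuffle(keys)
    sample = []
    # Round-robin: take one problem per non-empty cell per pass until n are drawn.
    while len(sample) < n and any(cells[k] for k in keys):
        for k in keys:
            if cells[k] and len(sample) < n:
                sample.append(cells[k].pop())
    return sample


def cell_counts(sample):
    """Number of sampled problems in each (type, level) cell."""
    return Counter((p["type"], p["level"]) for p in sample)
```

Because the generator is seeded, the same seed always yields the same 128 problems, which matters for the reproducibility argument made below.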

Evaluation

We evaluate using Chain-of-Thought (CoT) prompting with consensus. CoT prompting means that we incentivize the model (through few-shot prompting) to provide an explanation (here, a proof) before producing an answer. This technique is known to help models produce better answers. Consensus means that we run this process multiple times per problem (which yields a variety of responses due to model stochasticity) and pick the most frequently generated answer for each problem. For each execution within a consensus pool, we sample different train set problems as few-shot examples to improve diversity.
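The consensus procedure can be sketched as follows. This is a minimal illustration under stated assumptions, not the evaluation code itself: it assumes each response wraps its final answer in a \boxed{} directive, as MATH solutions do; `ask_model` is a hypothetical stand-in for a provider API call; and the number of few-shot examples (`shots=4`) is an assumed value. Only the pool size of 32 comes from this post.

```python
import random
from collections import Counter


def extract_boxed(text):
    """Return the content of the last \\boxed{...} in `text`, or None.

    Braces are matched by depth so nested groups like \\frac{1}{2} survive.
    This only extracts the answer; it does not normalize its notation.
    """
    start = text.rfind("\\boxed{")
    if start == -1:
        return None
    begin = start + len("\\boxed{")
    depth = 1
    for j in range(begin, len(text)):
        if text[j] == "{":
            depth += 1
        elif text[j] == "}":
            depth -= 1
            if depth == 0:
                return text[begin:j]
    return None  # unbalanced braces: treat as "no answer"


def consensus_answer(responses):
    """Majority vote over the answers extracted from a pool of responses.

    Responses without a parsable answer are ignored. Ties go to the answer
    encountered first (Counter.most_common preserves insertion order).
    """
    votes = Counter(a for a in map(extract_boxed, responses) if a is not None)
    if not votes:
        return None
    return votes.most_common(1)[0][0]


def run_consensus(problem, train_set, ask_model, pool_size=32, shots=4, seed=0):
    """Query the model `pool_size` times, each with freshly drawn few-shot examples.

    `ask_model(examples, problem) -> str` is a hypothetical stand-in for an API call.
    """
    responses = []
    for i in range(pool_size):
        # Seeded per (problem, run) so the draw is reproducible across models.
        rng = random.Random(f"{seed}:{problem}:{i}")
        examples = rng.sample(train_set, shots)
        responses.append(ask_model(examples, problem))
    return consensus_answer(responses)
```

Extracting the raw \boxed{} content is deliberately kept separate from any normalization: two notations of the same value still count as different votes here, which is exactly the problem the answer sanitization described later addresses.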

The most interesting part of this project is that the exact same messages were sent to all models, making this a "bit-wise" model comparison. This is only possible thanks to the recent convergence of all model providers on a unified chat-based interface and alignment process.

We use seeded random generators throughout the code to guarantee that the exact same messages are sent to all 7 models. We perform a total of 32 × 128 = 4096 queries per model. Running one evaluation costs approximately $50-$100, depending on the model's pricing.
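The determinism argument can be made concrete with a small sketch. It is a hypothetical illustration, not the prompt format shown in Appendix A: `build_messages`, `fingerprint`, the OpenAI-style `role`/`content` dicts and `shots=4` are all assumptions. The design point is that the random generator is seeded from the problem and the run index only, never from the model, so every model receives a bit-identical message list, which a hash makes easy to check.

```python
import hashlib
import json
import random


def build_messages(problem, train_set, run_index, shots=4, seed=0):
    """Build a chat message list (OpenAI-style role/content dicts) for one query.

    The RNG seed depends only on the seed, the problem and the run index, never
    on the model, so every model receives exactly the same messages.
    """
    rng = random.Random(f"{seed}:{problem['problem']}:{run_index}")
    messages = []
    for ex in rng.sample(train_set, shots):
        # Each few-shot example is a user question followed by a worked answer.
        messages.append({"role": "user", "content": ex["problem"]})
        messages.append({"role": "assistant", "content": ex["solution"]})
    messages.append({"role": "user", "content": problem["problem"]})
    return messages


def fingerprint(messages):
    """SHA-256 of the canonical JSON encoding, to check two payloads are bit-identical."""
    return hashlib.sha256(json.dumps(messages, sort_keys=True).encode("utf-8")).hexdigest()


POOL_SIZE, N_PROBLEMS = 32, 128
QUERIES_PER_MODEL = POOL_SIZE * N_PROBLEMS  # 32 * 128 = 4096
```

Logging `fingerprint(...)` next to each request would let one verify after the fact that the payload sent to each model was identical.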

An example "query" can be found at the end of this blog post (Appendix A). The evaluation code is available here: https://github.com/dust-tt/dust/tree/main/x/spolu/research/evals

Note

MATH is known for having noisy notations for the final answer, which is generally presented using a \boxed{} LaTeX directive (e.g. \boxed{\frac12} vs \boxed{\frac{1}{2}}); this interferes with the consensus mechanism. Instead of implementing an inevitably brittle sanitization function, we used GPT-4 to sanitize the answers of the train and test splits. The main advantage of using a model is that we can provide the same sanitization or formatting instructions to the evaluated models, significantly reducing the chance of false negatives. The sanitized test problems can be found here.

Results

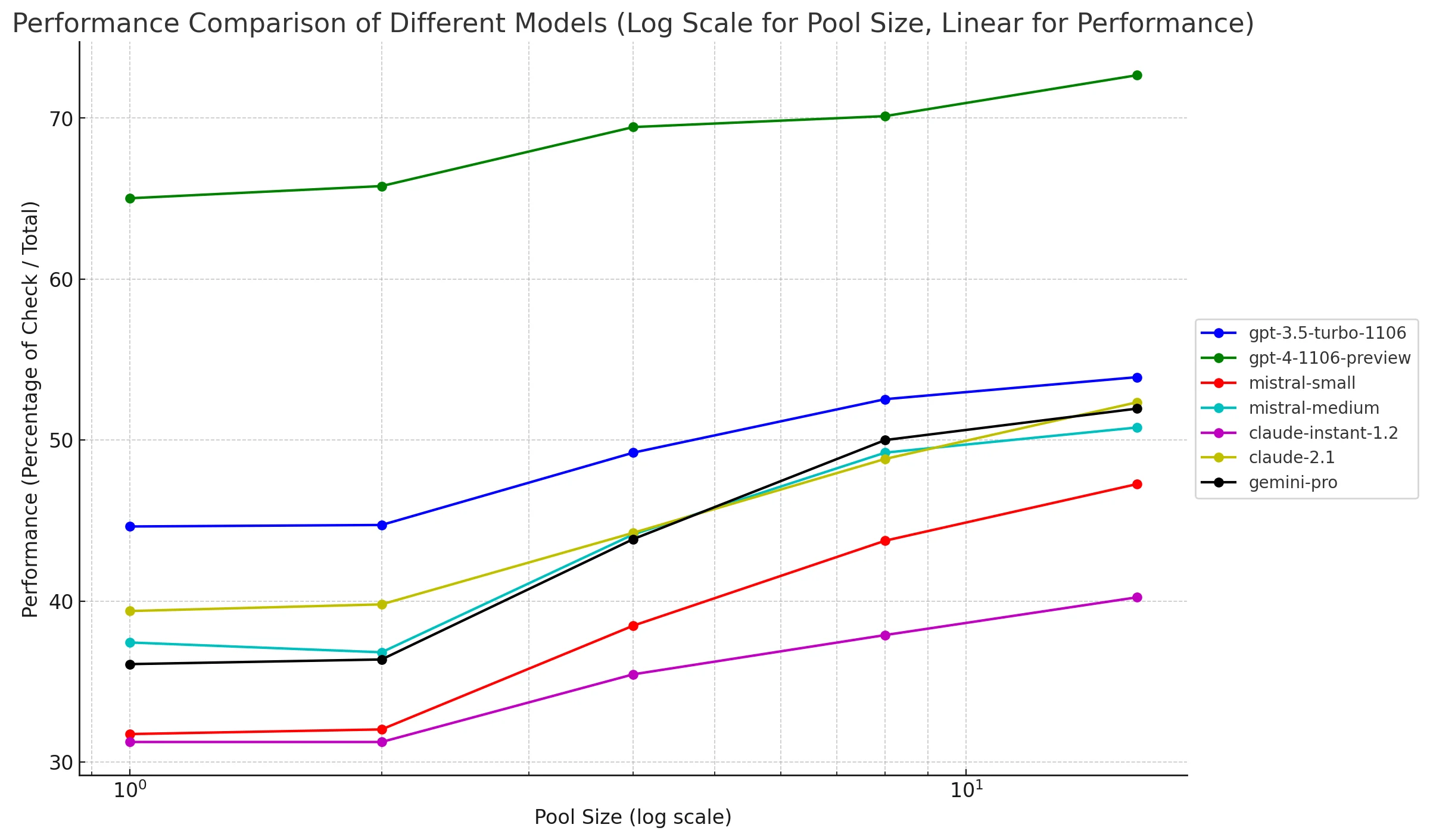

The raw results are presented in the table below. The numbers are the average number of problems answered correctly at each consensus pool size (out of 128 problems).

We run our evaluations with a pool size of 32 (the consensus part). This gives us fairly good estimates of the pass rate (percentage of successes) for smaller pool sizes, since we can average over multiple pools, but only a rough estimate for pool size 32.
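The averaging over pools can be sketched as follows. This rests on an assumption the post does not spell out: the 32 answers collected for a problem are split into disjoint pools of the target size, each pool is resolved by majority vote, and accuracy is averaged over those pools. The function names are hypothetical, and answers are assumed to be already extracted and sanitized.

```python
from collections import Counter


def majority(answers):
    """Most frequent answer; ties go to the answer seen first."""
    return Counter(answers).most_common(1)[0][0]


def pool_accuracy(samples, correct, pool_size):
    """Fraction of disjoint pools of `pool_size` answers whose majority vote is correct.

    With 32 samples, pool size 1 averages over 32 pools, while pool size 32
    leaves a single pool, hence a 0-or-1 estimate per problem.
    """
    n_pools = len(samples) // pool_size
    pools = [samples[k * pool_size:(k + 1) * pool_size] for k in range(n_pools)]
    return sum(majority(p) == correct for p in pools) / n_pools


def expected_solved(all_samples, all_correct, pool_size):
    """Average number of problems solved at a given pool size (out of len(all_samples))."""
    return sum(pool_accuracy(s, c, pool_size) for s, c in zip(all_samples, all_correct))
```

For a problem where 20 of 32 answers are correct, pool size 1 gives 20/32 = 0.625, while pool size 32 gives either 1 or 0 depending on the single vote, which illustrates why the largest pool size yields only a rough estimate.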

The associated pass-rates (success rates) are presented in the graph below (omitting pool size 32).

Conclusion

It is critical to our mission at Dust to keep a close eye on models' performance and how they compare. The convergence of all model providers toward larger context sizes and the unified Chat Message interface opened a unique opportunity to perform a "bit-wise" comparison on the MATH dataset.

The results are pretty clear: OpenAI models remain far ahead of the competition when it comes to reasoning. But it is also extremely exciting to see the progress of Mistral, with mistral-medium matching claude-2.1 and gemini-pro!

Subscribe for further updates on this evaluation project. We'll dive deeper into the effect of consensus, its limitations, and much more in upcoming posts.